The CEO of Depix, Philip Lunn, explained to us why generative AI has made traditional rendering obsolete, how his team is compressing the entire pre-CAD design process from weeks into minutes, and what happens when designers are no longer resource-constrained.

Real-Time Rendering for Quick Decisions

What is the problem you’re out to solve?

I’ve wanted to solve the same problem since 2003: visualising an idea with enough clarity to make a decision, fast, through real-time rendering for design. That was the goal and I’m still doing the same thing.

Back then I founded Bunkspeed. We started with a real-time game engine, taking a CAD wire file, tessellating it, materialising it, putting it in a world with physics so you could drive your car around with a PlayStation controller.

Then in 2005 or so we added real-time ray tracing. That became Hypershot, which became the standard for industrial designers to visualise their products. Hypershot used the .bip file format, which stood for “Bunkspeed Interactive Photograph” and was a selling point at the time. In 2010, Hypershot became KeyShot. It’s still the standard today, and still uses the same .bip file format.

It was so successful because it simplified getting a high-fidelity image to either make a decision or sell your product. Now with generative AI, that whole process has been flipped on its head.

There’s a philosophy that states the best way to stay relevant is to constantly think about how to make yourself irrelevant. Sounds like you’ve done exactly that with Depix.

Very true. Otherwise you get stuck in the Innovator’s Dilemma. You innovate something, become successful, and then you’re stuck because how do you reinvent yourself? But the companies that have been around the longest reinvent themselves all the time. If you don’t, you will be reinvented out of business. And this is a big reinvention.

What does a typical product design team’s visualisation workflow look like today?

Sketching. That’s how it begins. You make a bunch of design iterations on a sketch, refine it, present it. Somebody comes in and picks the ones to take further. That sketch gets handed to a modeller. The modeller builds a 3D model, works with the designer, materialises it, renders it. They’ll do three or four versions. Maybe eight to twelve people working away building these 3D models.

In the automotive industry, they’ll then visualise those and pick a few to mill out as physical models. They’ll put them on pedestals and study them. Then they pick one, make it full size in clay, hand-sand it, scan it, resurface it and bring it back to engineering. That process has been the same since the digital model came out 50 years ago.

Your website talks about safe designs winning and limited volume for product ideation. Tell us more about this.

Every company has the same issue of limited resources. You have ideas and you want to generate options for those ideas, but everybody has a resource problem, all the time. That’s the biggest thing we solve – scarcity of resources. Now one person can do the work of ten or twenty. The chief designer gets a much broader array of concepts to curate. They’re not resource-constrained anymore.

And if you look at it from that point of view, this is the greatest thing to happen to product design. It doesn’t eliminate jobs, it gives you more resources. The constraint shifts downstream to manufacturing and engineering. But AI is permeating those processes too.

From Intent to Product, Without the Technicians

What does Depix do?

You don’t need to start with 3D geometry anymore. You can start with just an idea. You type in your idea, it generates a series of images, you choose one, modify it, generate a 3D model, then make changes to features and explore variations. We’ve found you can do that whole process in minutes. From a spark of an idea to a finished visualisation that’s resolved enough to decide whether you should build it or not.

We call it pre-CAD. Before you start doing the 3D model and the engineering, we compress everything that used to take weeks or months into minutes. Then the next step is the engineering portion, which is the big laborious piece that still hasn’t been fully automated.

Can you explain the different products under the Depix umbrella?

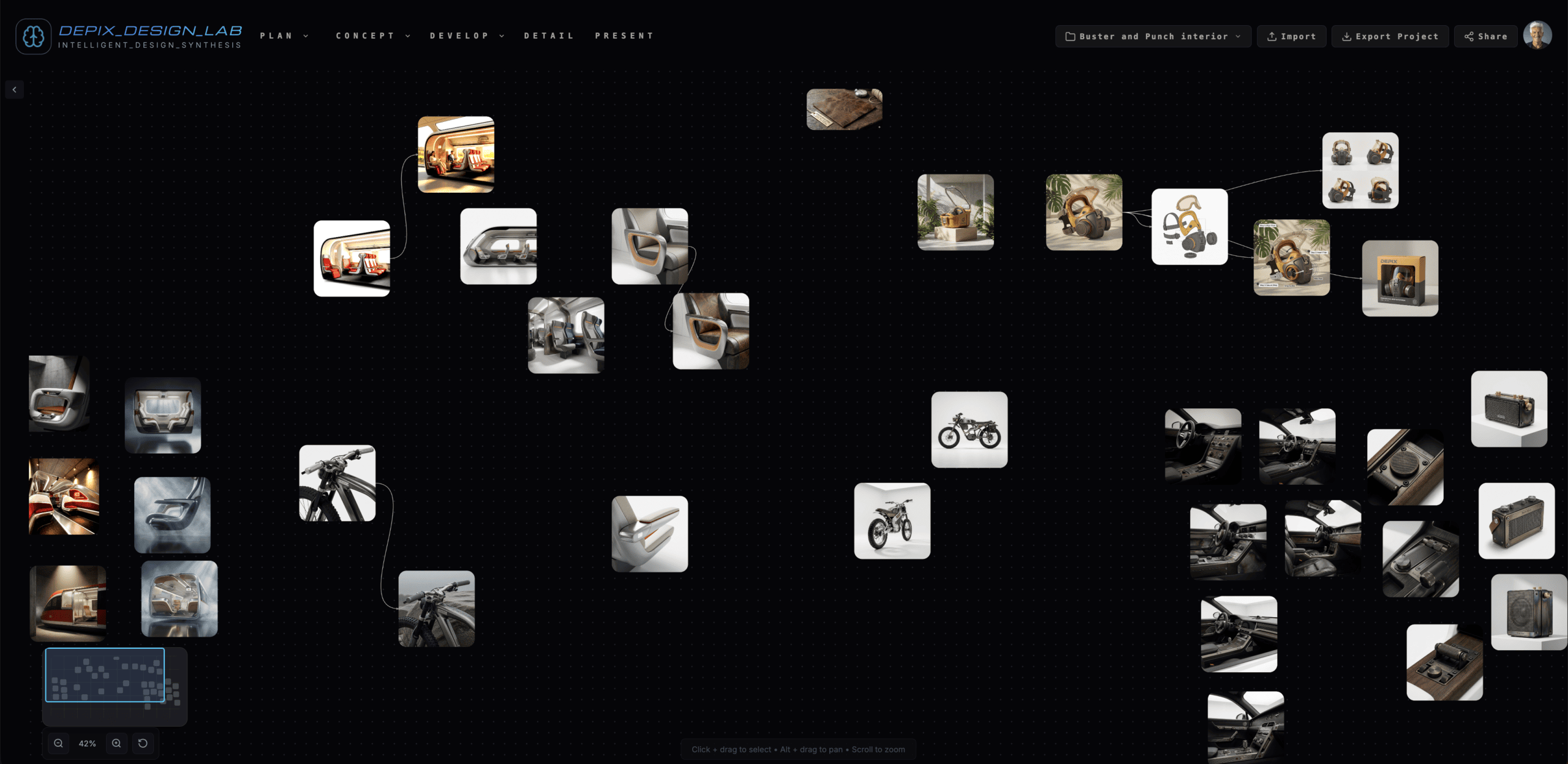

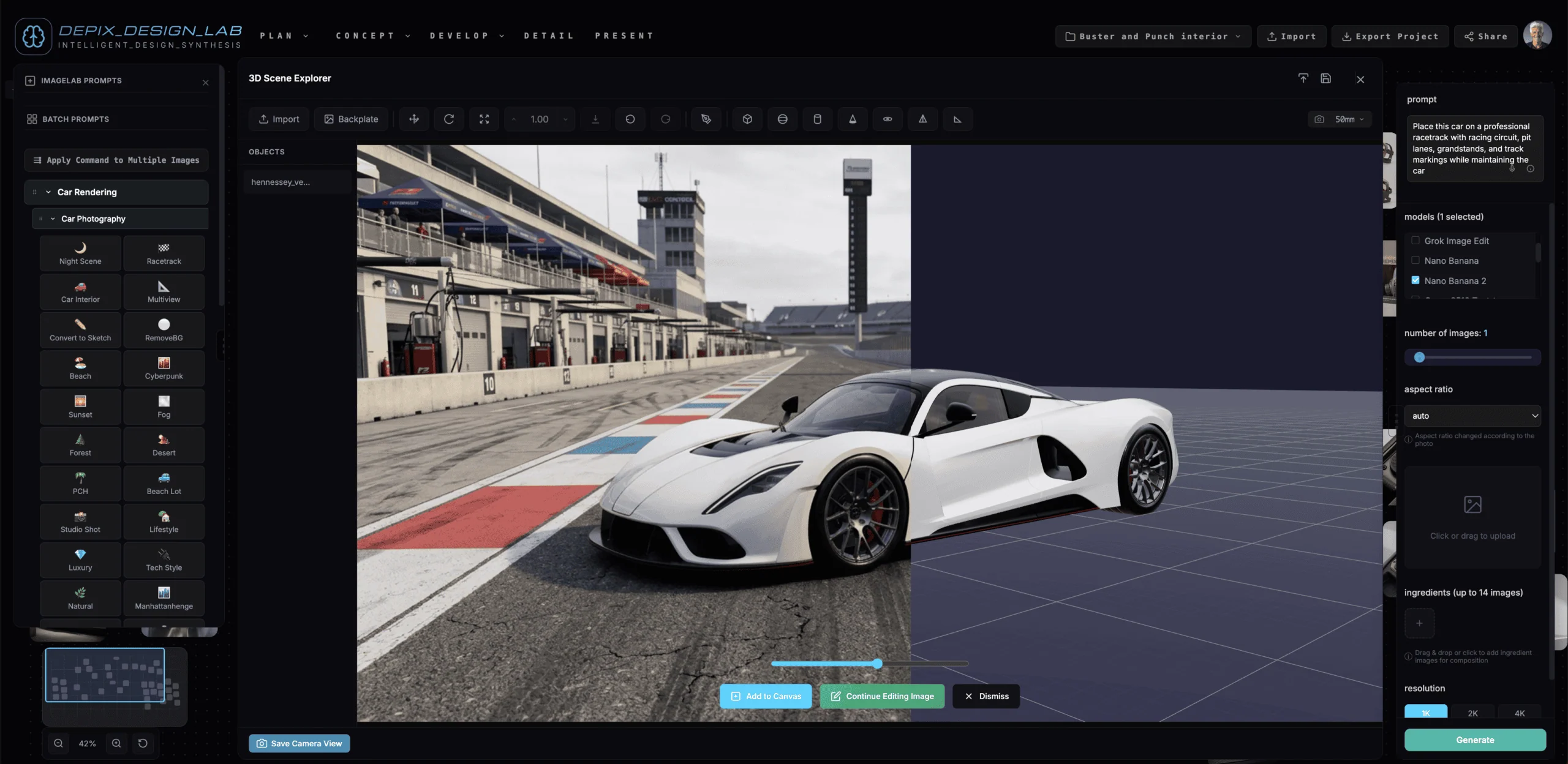

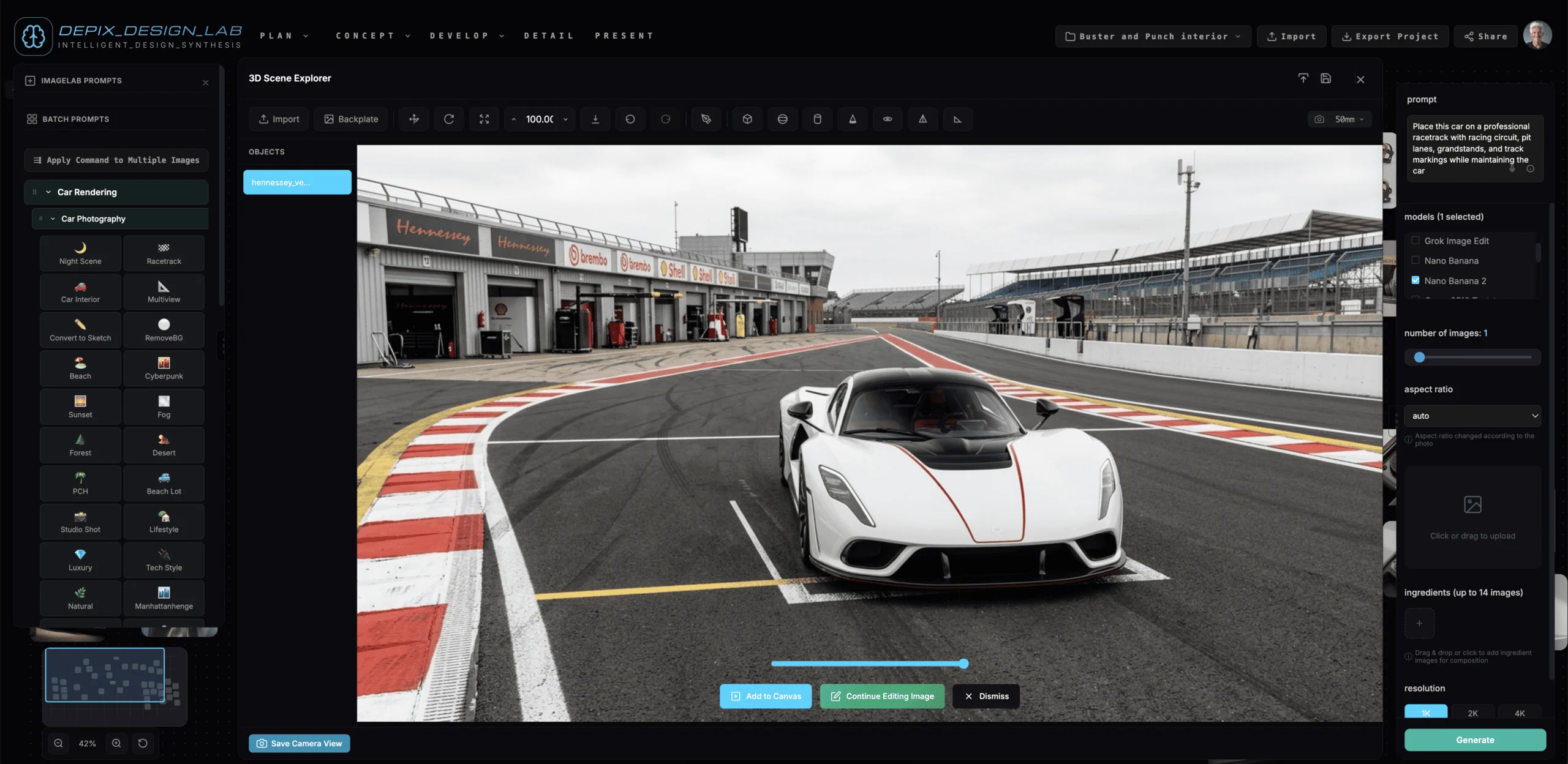

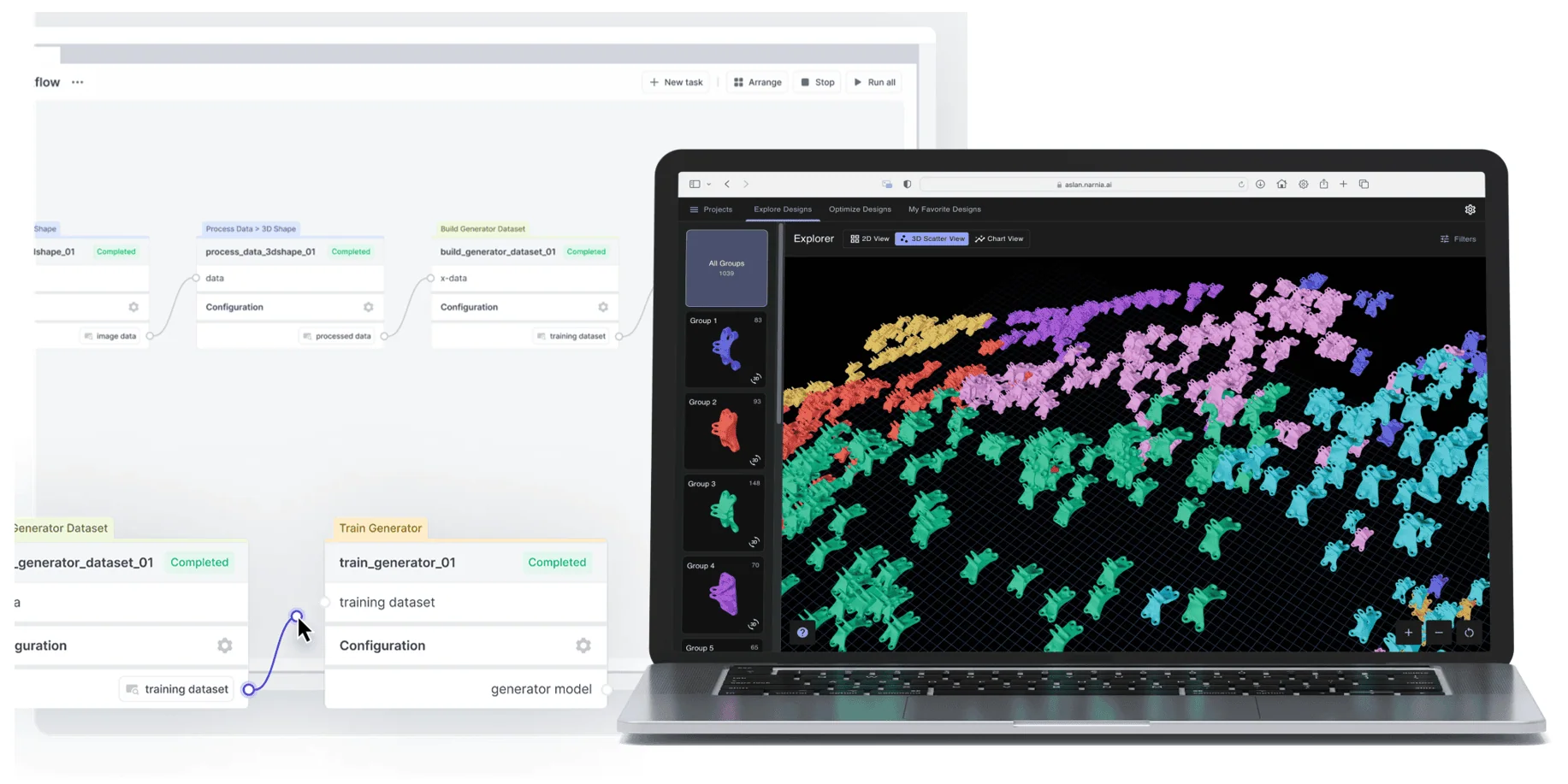

Design Lab is the orchestration layer. It’s an infinite canvas where you bring images in, work with hundreds of preset prompts for generating variations, and launch different agents from there. You can bring in a 3D model and visualise it like you would in a traditional package, but everything uses generative AI instead of ray tracing.

Product Vision is an early-stage idea agent. You put in an idea, say “luxury barbecue, Salvador Dali,” and it generates some fantastic designs. But you don’t even need a prompt. You literally put in an idea of what you want to make and it goes out, does market research, synthesises that research and creates creative concepts.

Then there’s the CMF tool for colour, materials and finishes. You put in your product, it searches the market for current colour trends, synthesises them and generates materials and finishes that you can apply to your image. It compresses weeks of CMF work into minutes.

And Product Shape, our 3D model generator. You take an image, generate a 3D model, and edit feature lines with Bézier curves. If you’ve got a fender you want to move up a little, you put down a curve, adjust it, bring the 3D model back in and re-render it using AI.

Can you give me a concrete example of the workflow?

My partner Chris Braun was head of visualisation at Porsche Design for 20-plus years. He typed “Mercedes tail light” into Product Vision. It produced a few versions. He brought them into Design Lab, made a couple of variations on the image, hit the Animate button and it generated animated lights doing a pattern. The whole thing took minutes.

He posted it on LinkedIn and it blew up. And if you think about it, that tail light doesn’t exist. It may be a variation on five different real ones, all combined into something new. But that’s what designers do. They look at things, combine influences, create something new. This just does it much faster.

Let’s talk about the “creating something new” part. If I don’t input my own sketch, won’t the output just look like what already exists?

You’d think that, but it’s actually not the case. You can get very creative results for things that don’t exist at all. People say AI is not creative, but it’s creative in the same way that humans are. Designers study the arts, they do market research, they look at competition. AI does the same thing, it’s just much faster. You can be as radical or as similar as you like. The designer becomes the curator instead of the technician.

How much control does the designer have over the output?

You can start with a sketch if you want, import it and iterate. With Product Vision we have controls for guiding certain elements. A small word change can steer you in a new direction, and you can add your logo or adjust things. You can’t yet say “raise that line two millimetres”, but we expect that level of constraint-based control to come. Our vision is that you describe your intent in detail and the system pulls all that information together.

Rick Rubin, the music producer, has said he gets paid for the confidence in his taste. Is that where product design is heading?

That’s exactly what’s happening. Chief designers of any company are paid to produce something tasteful and attractive that will be desired by others. It’s always been that way. But in the past it required a big army of people to support that vision. “I have 150 people who produce ten ideas to a state where I can look at them and decide.” That still happens, but now the time is compressed to almost nothing. And the person being paid for their taste doesn’t need technical skills in 3D modelling or rendering anymore. AI makes people who are good 10 times better. But if you’re not good, you’re done.

Automotive First, Any Product Next

Who is using Depix today?

Ford, Nissan, Lamborghini, Porsche. I went back to my old clients and said “I’m going to build this thing, will you buy it?” and they all said yes. We also have product design firms. We’re still relatively new in our product offering and we’re launching a whole new integrated suite very soon.

What drives adoption?

The results. Mostly the early adopters have been experimenting, and our goal is to move from experiment to enterprise-wide. We’re there with one company now. Enterprise adoption is very slow for this kind of technology in general.

We see any company that manufactures a product as a potential client. More specifically companies that are already doing this design work, mostly with KeyShot. We’re just the new way of doing that same work faster.

And what does adoption look like at the moment?

2026 is the first year we’re seeing real adoption. 2027 is when people will go, “this is a new way of doing business and we have to do it.” And if you’re not thinking about it now, there’s going to be some company that eats your lunch.

Design to Manufacture in a Day

You must have come up with some pretty cool products just testing your own software. Does anything stand out?

Every now and then something comes out of our machine and we’re like, “we should build that.” Chris made an office chair that was just so cool, I’d never seen anything like it. I did a reverse Google image search and found nothing similar.

We’ve actually started a collection internally, we call it the Stealth Product Company. We just drop these designs in there. Eventually we may become a product manufacturing company, which I think would be the right thing. We’d need a manufacturing partner, and we’ve already considered getting quotes on some of these.

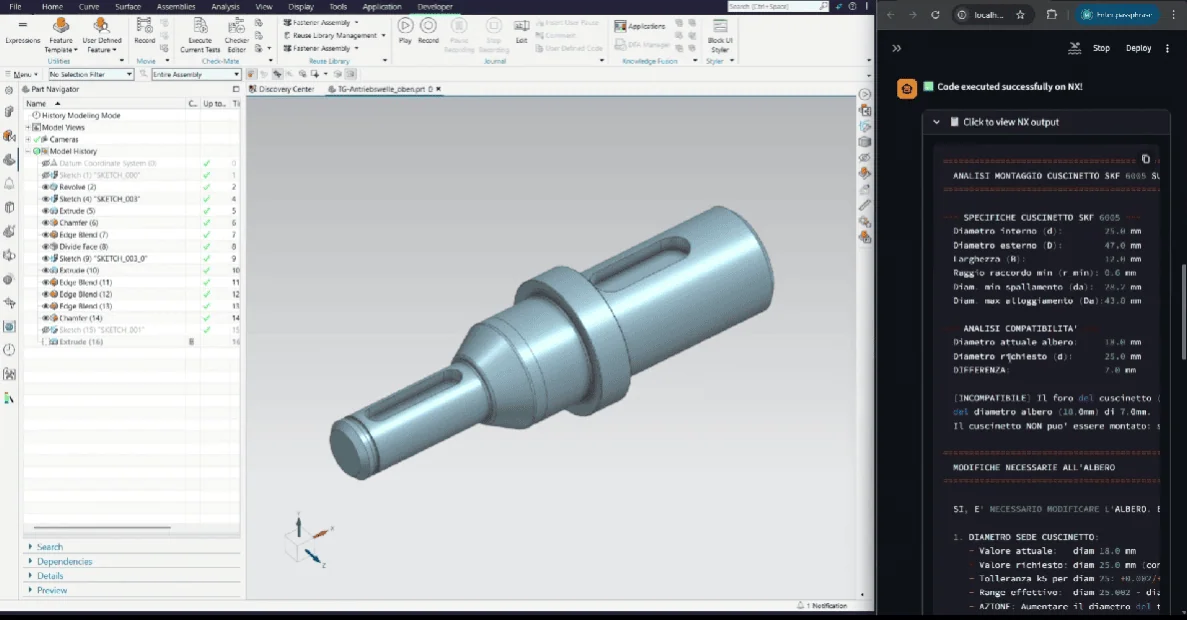

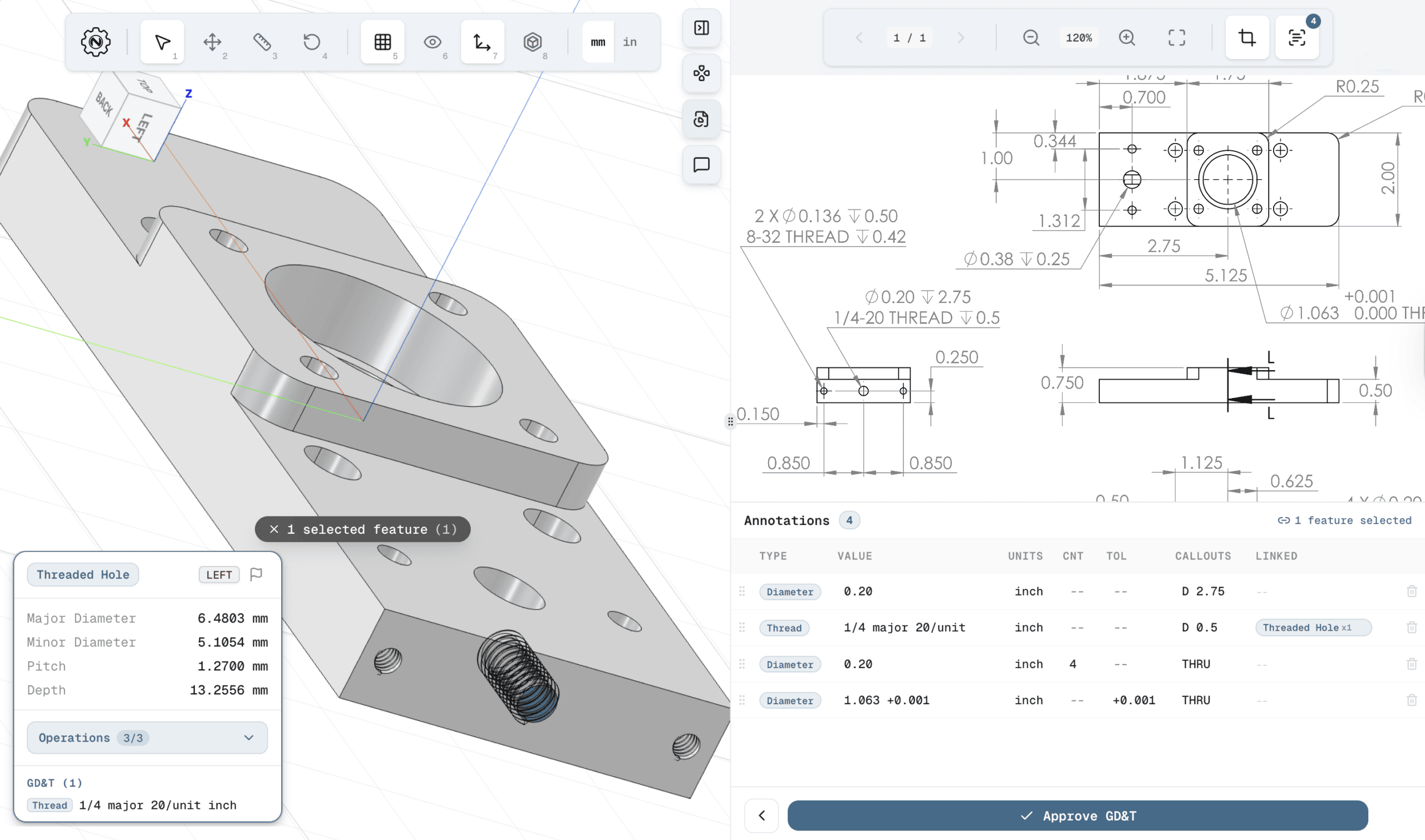

Most tools in our interview series operate in manufacturing and detailed engineering. Depix sits much earlier, at conceptual design. Do you see a path to actual engineering outputs?

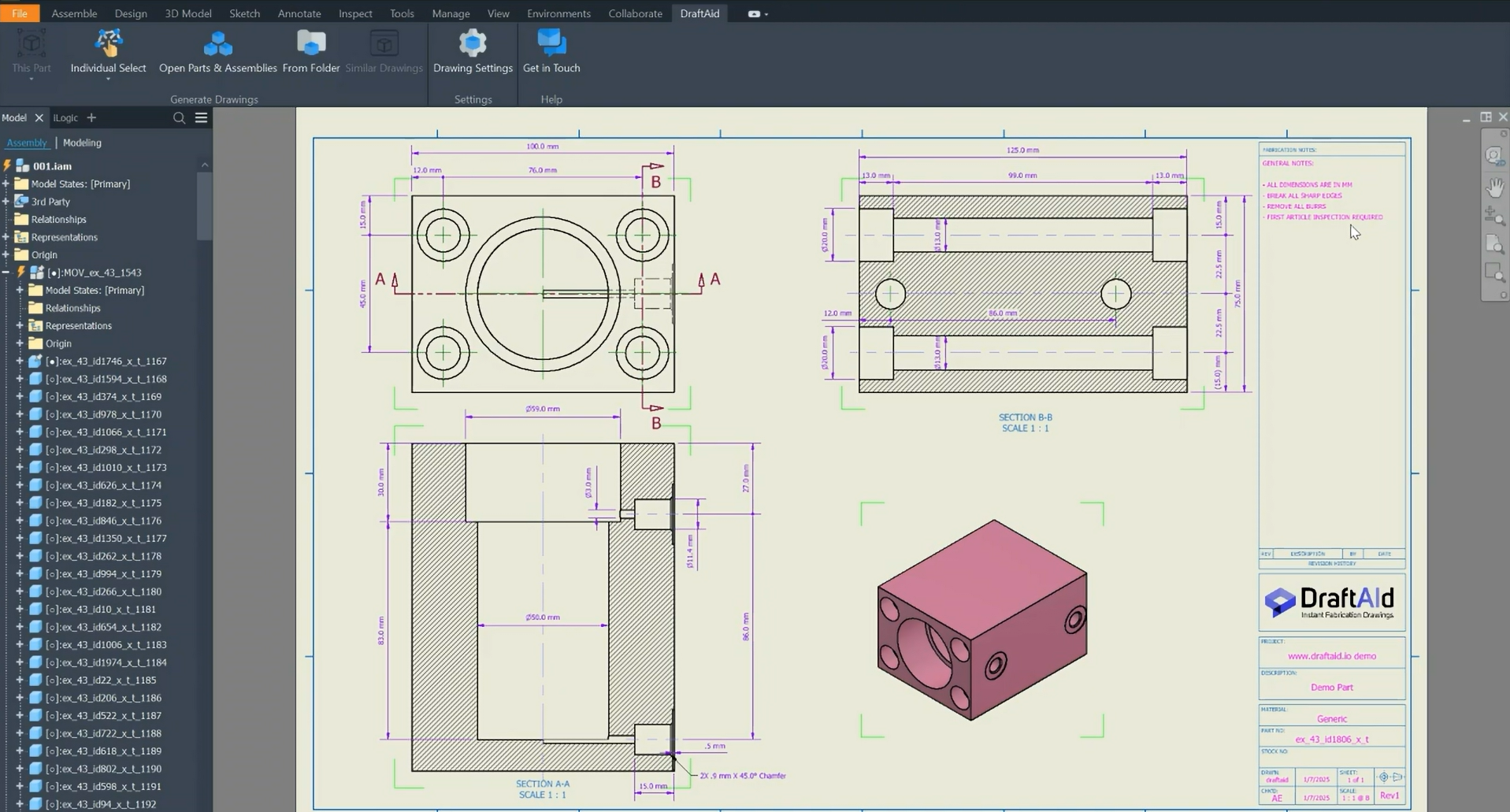

Absolutely. Our vision is from idea to product-ready-to-manufacture. Full set of plans, everything, send it off to a manufacturer. That’s the dream. And I was interested in what Bench is doing because they’re basically saying you give it a drawing and it generates the 3D model ready for engineering. That’s exactly the next step after what we do.

Because we’re already an agentic system, we expect Design Lab to manage third-party agents as well. You’ll have a fluid dynamics agent, a stress analysis agent. We’re going to plug those into our system. Design to manufacture in a day. That’s our goal.

There’s been slow adoption of AI in design. Why?

The MIT report says 85% of all AI projects fail. My belief is they fail because they’re sabotaged, though not on purpose. The people put in charge of evaluating these tools realise it’s a radically new way of doing things and it scares them. They find some reason to say it doesn’t work. Not until the boss who understands they’re resource-constrained says “I need to add resources I don’t have a budget for, and now I have a solution” does everything change.

DeepMind’s CEO Demis Hassabis said this is ten times bigger and ten times faster than the Industrial Revolution. And if you’re not thinking about it now, the Chinese are in the fast lane for everything that’s made. If we don’t make this transition in the West, we risk being relegated from manufacturing entirely.

Any interesting AI companies in engineering you’d highlight?

A good example of what’s happening with AI in engineering is Leo.
